The Evergreen Guide To Facebook Ad Optimization

Chapter 2

Beginner’s Guide to A/B Testing Facebook Ads

Testing Is the Way to Success!

A/B testing on Facebook is used to experiment with different campaign elements to find out what works best. Sadly, in the real world, there’s no magical shortcut to knowing which ad design, offer, or target audience will perform best. That’s where A/B testing Facebook ads comes in.

Instead of one team member saying “We have to use a red ad design” and this being the end of the discussion, marketers can set up multi-variable tests to see which ad variations actually work.

At AdEspresso, we’re big fans of Facebook ad experiments and test new ideas every single month. You too can get started with split testing Facebook ads and set up your first A/B tests!

- What is A/B testing?

- How to set up A/B testing on Facebook?

- What are the best practices for Facebook A/B tests?

- How to set up an A/B test in AdEspresso?

- What is AdEspresso Grid Composer?

- What elements of a Facebook ad should you A/B test?

Ready to get even better Facebook ad results? Let’s get started!

What Is A/B Testing?

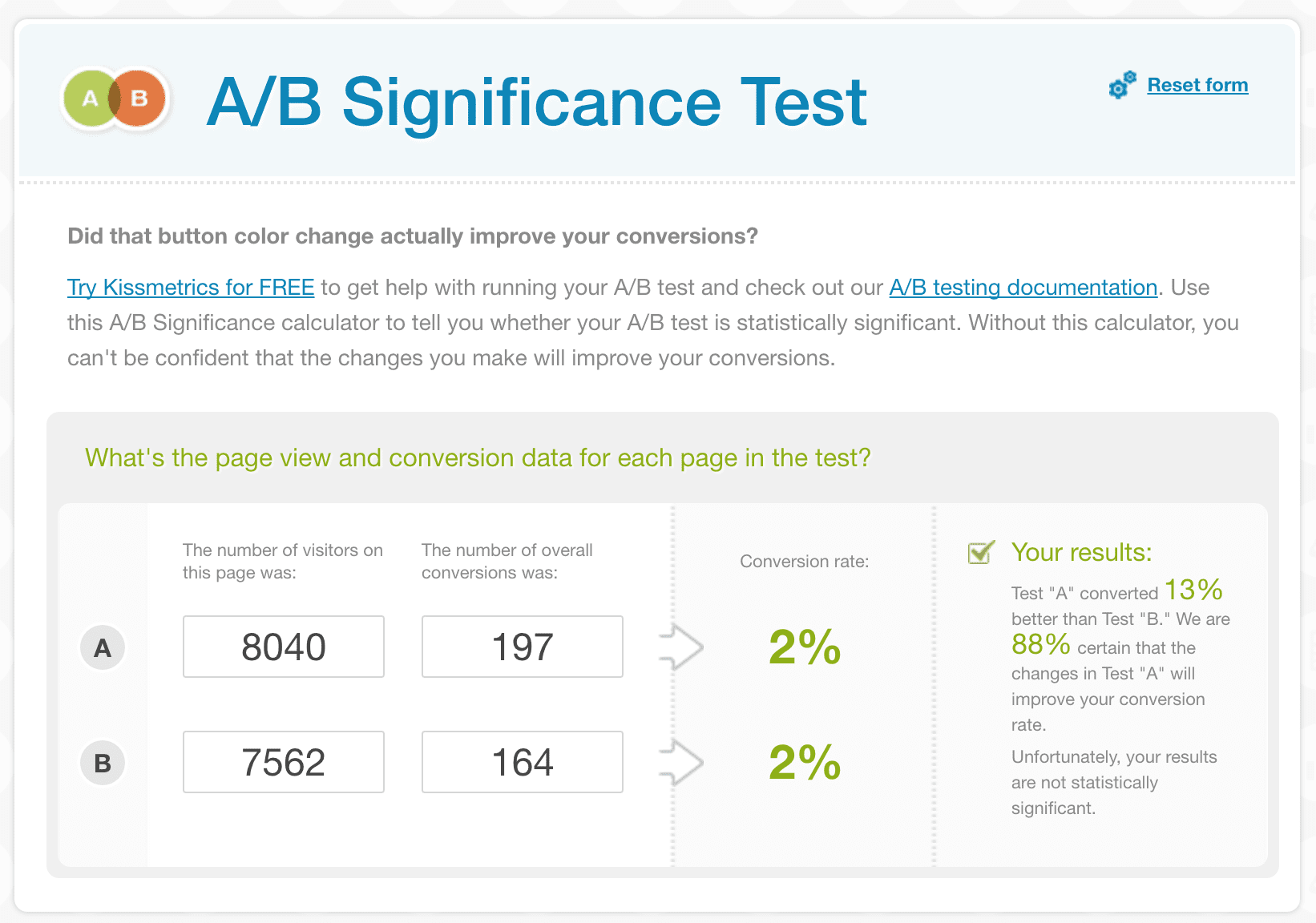

A/B testing, also called split testing, is a tactic for finding out which ad headlines, body copy, images, calls to action, or combinations of these work best for your target audience. You can also experiment with different Facebook audiences and ad placements to discover who your ideal audience is and which placements reach them.

Usually, A/B tests run for a couple of weeks while advertisers wait for results to come in. Once the experiment is complete, you decide whether one option outperformed the other(s). You can check that your results are statistically significant by using a statistical significance calculator.

Unless you’ve already created a lot of Facebook ad campaigns for your product, it’ll be pretty hard to predict which ad design will work better or which demographic audience is more likely to buy your product. This is where A/B testing comes in handy: you can quickly test multiple ad designs and target audiences to uncover the most effective ones.

Let’s look at an example, assuming we want to test two different ad designs to generate leads for our eBooks:

| Metric | Test 1 (Ad 1) | Test 2 (Ad 2) |
|---|---|---|
| Impressions | 10,000 | 10,000 |
| Clicks (CTR) | 237 (2.37%) | 187 (1.87%) |
| Sales (conversion rate) | 28 (11.81%) | 16 (8.56%) |
| Spend | $150 | $150 |
| Cost per sale | $5.36 | $9.38 (+75%) |

As you can see from the results, Ad 1 outperformed Ad 2: with the same $150 spend, it generated 28 sales instead of 16, so each sale from Ad 2 cost 75% more.

To put this in perspective, if you had published a campaign with only Ad 2 and run it at a larger scale with a $2,000 budget, you’d have wasted roughly $857, generating only 213 sales versus the 373 sales you’d have in your pocket with Ad 1!
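Every derived number in the table, and the $2,000 projection, is a simple ratio of the raw counts. Here is a minimal Python sketch that recomputes them; the helper name `funnel_metrics` is hypothetical, and the projection assumes cost per sale stays constant as the budget grows, which real campaigns rarely guarantee.

```python
def funnel_metrics(impressions: int, clicks: int, sales: int, spend: float) -> dict:
    """Derive CTR, conversion rate and cost per sale from raw ad counts."""
    return {
        "ctr": clicks / impressions,        # click-through rate
        "conversion_rate": sales / clicks,  # sales per click
        "cost_per_sale": spend / sales,
    }

ad1 = funnel_metrics(impressions=10_000, clicks=237, sales=28, spend=150)
ad2 = funnel_metrics(impressions=10_000, clicks=187, sales=16, spend=150)

# How much more each sale costs with Ad 2 (same spend, so 28 / 16 - 1)
extra_cost = ad2["cost_per_sale"] / ad1["cost_per_sale"] - 1

# Scale both ads to a $2,000 budget, assuming cost per sale does not change
budget = 2_000
sales_ad1 = budget / ad1["cost_per_sale"]
sales_ad2 = budget / ad2["cost_per_sale"]
# Part of the budget Ad 2 spends beyond what Ad 1 would need for the same sales
wasted = budget - sales_ad2 * ad1["cost_per_sale"]

for name, m in (("Ad 1", ad1), ("Ad 2", ad2)):
    print(f"{name}: CTR {m['ctr']:.2%}, CR {m['conversion_rate']:.2%}, "
          f"cost per sale ${m['cost_per_sale']:.2f}")
print(f"Ad 2 cost per sale is {extra_cost:.0%} higher")
print(f"$2,000 buys ~{sales_ad1:.0f} sales with Ad 1 vs ~{sales_ad2:.0f} with Ad 2 "
      f"(~${wasted:.0f} wasted)")
```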

A/B testing helps you uncover the best-performing solutions and optimally use your ad budgets.

How to Set Up Facebook A/B Testing

As you get started with Facebook advertising, you’ll realize that there are so many things you’d like to test: ad images, copy, target audiences, bidding methods, campaign objectives, etc. The rookie mistake you’re likely to make at this point is creating an A/B test with too many changing variables.

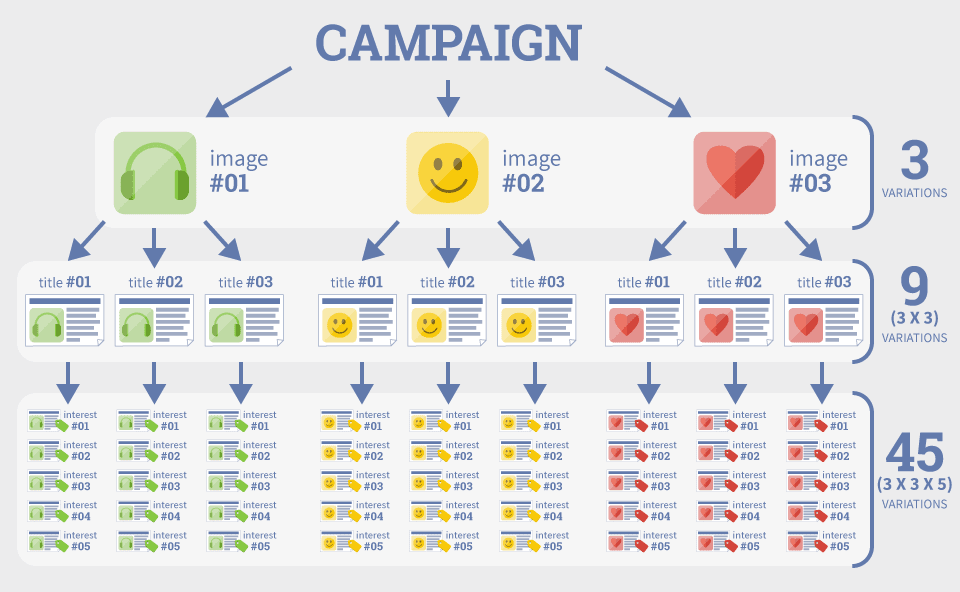

Let’s say you want to test 3 ad images, 3 headlines, and 3 versions of the main copy. That makes 3 × 3 × 3 = 27 different Facebook ads, and a test like this will take weeks to conclude. A test can quickly become huge! If you want to test five images, four ad titles, and five precise interests, you’d have to create 5 × 4 × 5 ads to cover all the possible combinations: a total of 100 ads!
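Testing every combination means one ad per combination, so the ad count is the product of the number of variants for each element. A short Python sketch of that arithmetic (the helper name and element labels are illustrative):

```python
from math import prod

def count_ads(variants_per_element: dict[str, int]) -> int:
    """Number of ads needed to test every combination of the given elements."""
    return prod(variants_per_element.values())

print(count_ads({"images": 3, "headlines": 3, "main_copy": 3}))  # 27
print(count_ads({"images": 5, "titles": 4, "interests": 5}))     # 100
```

Because each extra element multiplies the count instead of adding to it, a test grows out of hand much faster than intuition suggests.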

Setting up 100 or even 20 different Facebook ads would take hours… And of course, you’re smarter than that!

This leaves you with two options: either create smaller experiments with four or five variations, or use an external Facebook ads management tool specifically designed for A/B testing (shameless plug: AdEspresso could be a good solution, and we offer a free 14-day trial!).

Overall, once you’re spending more than $2,000 per month, we suggest using an external Facebook ads management tool that will make your life easier by saving both time and money.

Setting Up Facebook A/B Testing in AdEspresso

The easiest way to set up A/B testing on Facebook with multiple variations is by using AdEspresso. Seriously, that’s one of the main reasons marketers all around the world love using the AdEspresso ad management tool.

To set up a new A/B testing campaign, log in to your AdEspresso account and click on “New Campaign” in the top menu.

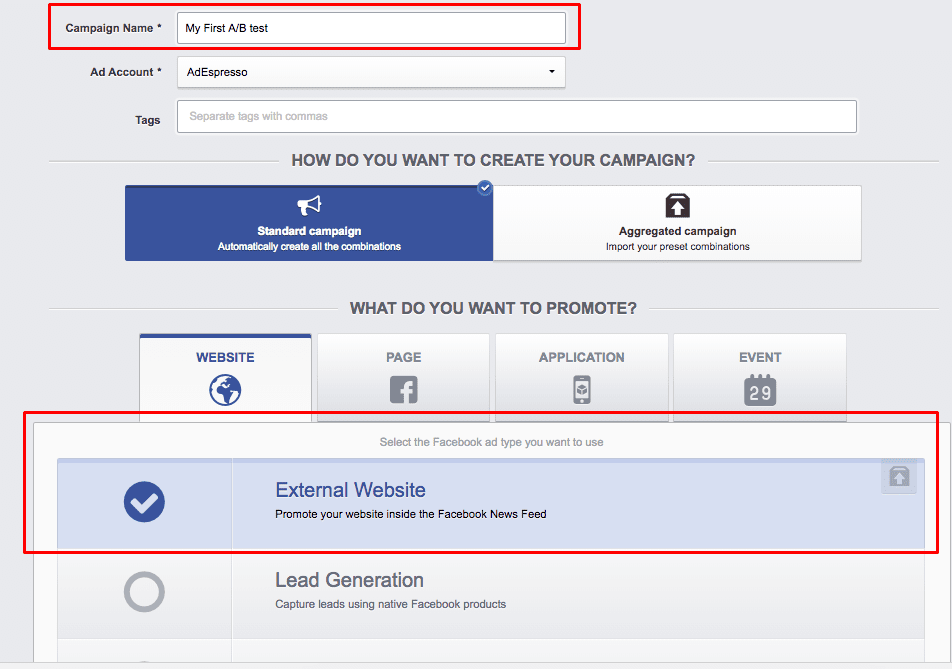

AdEspresso offers two distinct ways to create a split test in your Facebook campaigns, and we’re going to review both.

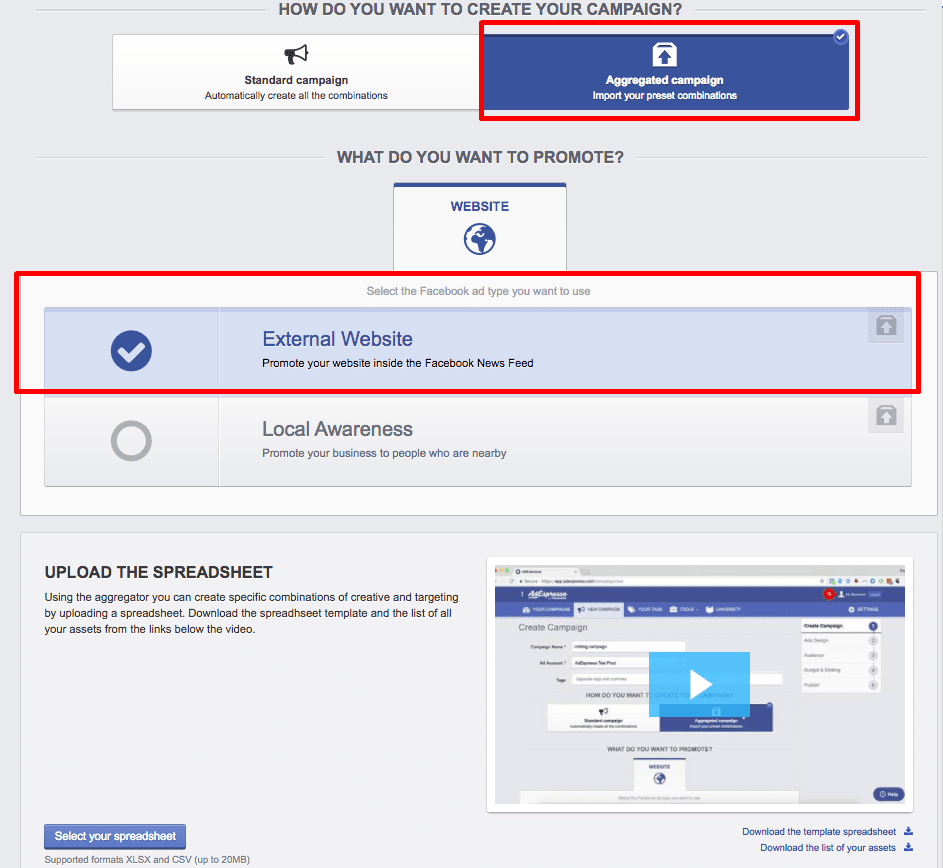

If your goal is to drive people to your website, select the “External Website” promotion option.

You can opt to create a “Standard” campaign in AdEspresso, where all of the different combinations of ads will be generated for you automatically based on the elements you are split testing.

The other option is to create an “Aggregate” campaign, where you test preset combinations of ads based on elements you’ve added to a spreadsheet and uploaded to AdEspresso.

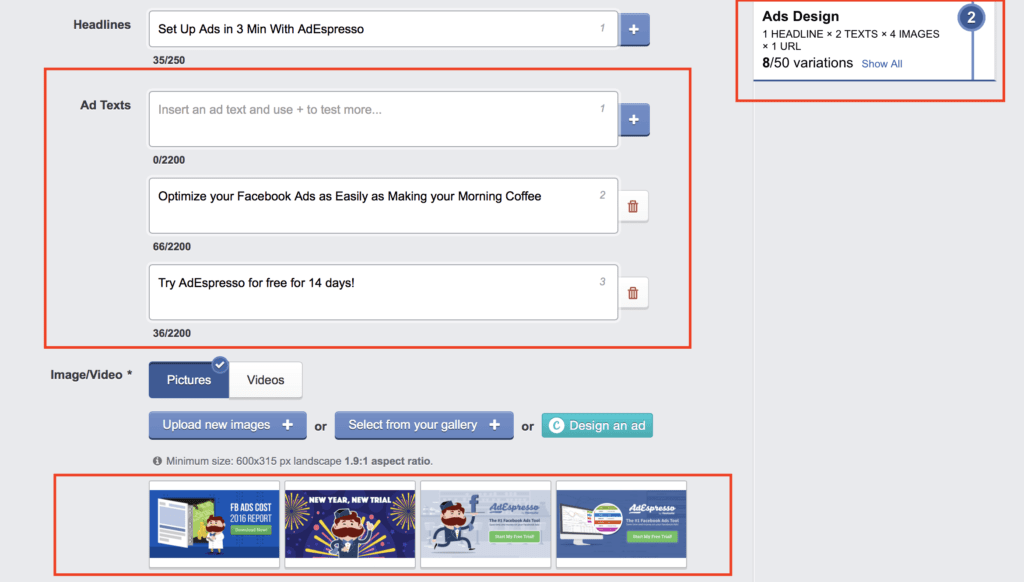

In the next step of the standard campaign setup, you can enter several ad copy variations, headlines, ad images/videos, etc., and AdEspresso will create every possible combination of ads for you based on the elements you chose to test.

For example, you could split test two different ad copies and four Facebook ad images, resulting in eight different Facebook ad variations.
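Generating “every possible combination” is a Cartesian product of the element lists. Below is a minimal Python sketch of the idea using hypothetical placeholder asset names; it illustrates the concept and is not AdEspresso’s actual implementation.

```python
from itertools import product

# Hypothetical placeholder assets; substitute your real copy and image files
ad_copies = ["Copy A", "Copy B"]
images = ["image_1.jpg", "image_2.jpg", "image_3.jpg", "image_4.jpg"]

# Every (copy, image) pairing becomes one ad variation
variations = [
    {"copy": copy, "image": image}
    for copy, image in product(ad_copies, images)
]

print(len(variations))  # 8
for v in variations[:3]:
    print(v)
```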

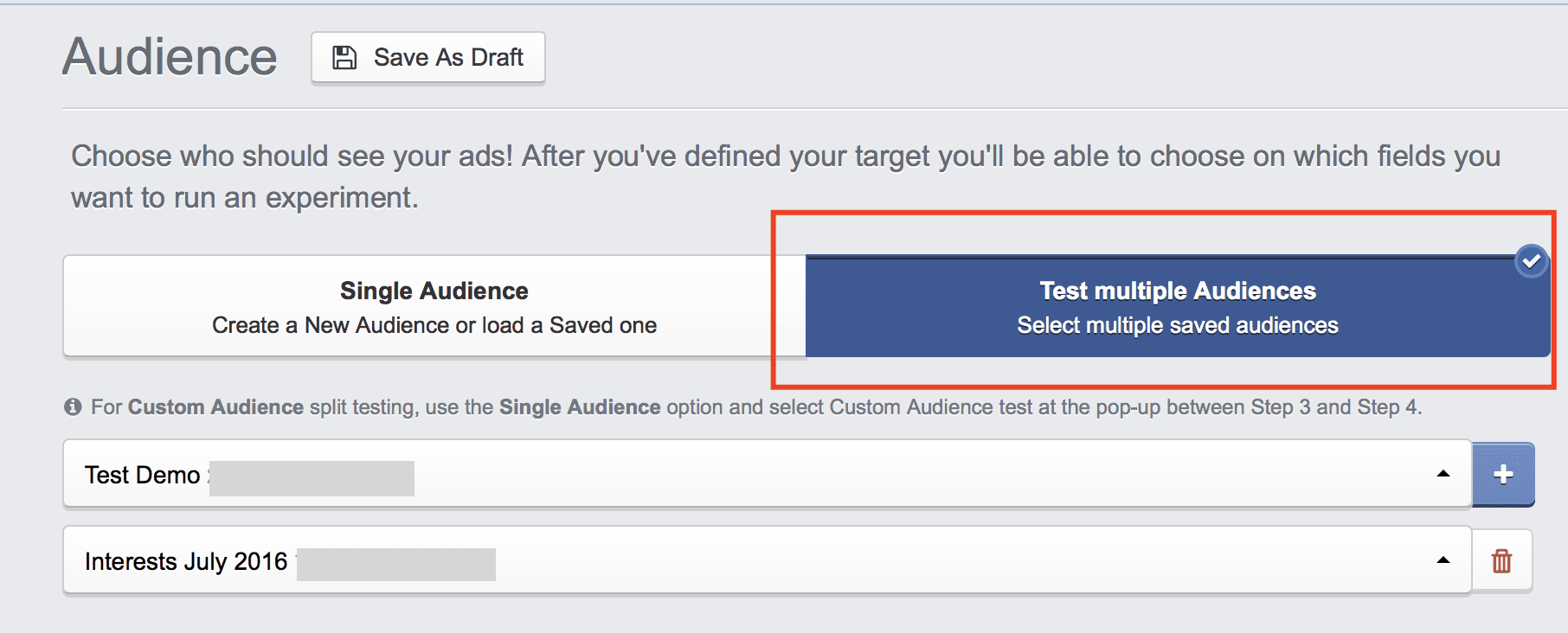

After you’ve finished adding new ad elements and variations, hit the “Proceed” button. In the third step of your A/B test setup process, you can select one or multiple Facebook audiences you’d like to target.

You can also split test multiple Facebook audiences.

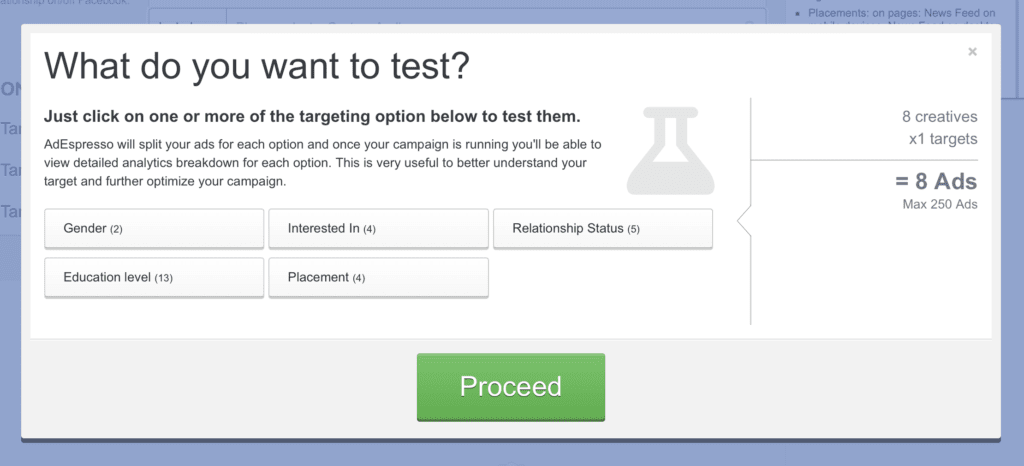

And just when you thought it couldn’t get any better… Before you proceed to the Bidding and Ad Schedule setup, AdEspresso will offer you additional options for A/B testing!

Once you’ve set up all your Facebook ad campaign’s elements, it’s time to hit the “Publish to Facebook” button and start waiting for the results to come in.

If you’d like to learn more about Facebook ads tracking, measurement, and evaluation, read Chapter 8 of our guide, where we dig deep into Facebook Ads Reporting and Optimization.

Grid Composer, A/B Testing on Steroids

Let’s create our campaign using the Grid Composer, a feature we released in 2018 to offer even more flexibility when creating A/B tests for Facebook ads.

At Step 1 of campaign creation, instead of a “Standard” campaign, let’s select “Aggregate”.

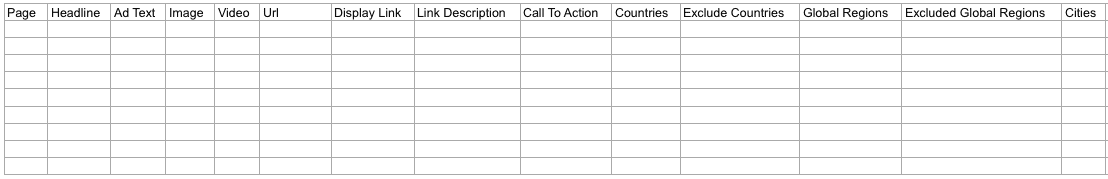

We can create an External Website campaign or a Local Awareness campaign with the Grid Composer. Once you’ve selected one, you’ll be able to choose the spreadsheet you want to use for the campaign.

AdEspresso offers a downloadable template you can use to customize your spreadsheet with your own information.

Once you finish your spreadsheet and upload it into AdEspresso, the fields you have in your spreadsheet will be greyed out in the campaign creation. If you left any elements empty in the spreadsheet, you’ll be able to choose them during campaign creation. This provides an easy way to match specific headlines, ad texts, images, video, and any other element of your creative or targeting into the campaign without creating any unwanted combinations.
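The difference from a Standard campaign is that each spreadsheet row defines exactly one ad, so no extra combinations are generated. A minimal Python sketch of that idea follows; the column names and row contents are hypothetical, and AdEspresso’s real template may use different columns.

```python
import csv
import io

# Hypothetical spreadsheet contents (one row per ad you actually want to run)
SHEET = """headline,ad_text,image
Free eBook,Learn Facebook ads in a weekend,ebook_cover.jpg
Free eBook,Stop wasting ad budget,budget_chart.jpg
Grab the guide,Learn Facebook ads in a weekend,ebook_cover.jpg
"""

def preset_combinations(sheet_text: str) -> list[dict[str, str]]:
    """Treat each row as one ad; unlisted pairings are never created."""
    return list(csv.DictReader(io.StringIO(sheet_text)))

ads = preset_combinations(SHEET)
# 3 ads, versus 2 headlines x 2 texts x 2 images = 8 in a full grid
print(len(ads))
```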

AdEspresso also offers another powerful way to create specific combinations of ads without filling out a spreadsheet: ad templates, which you can create in your Asset Manager.

The Asset Manager is accessible from your Tools Box; from there, click “create new asset” in the top right corner.

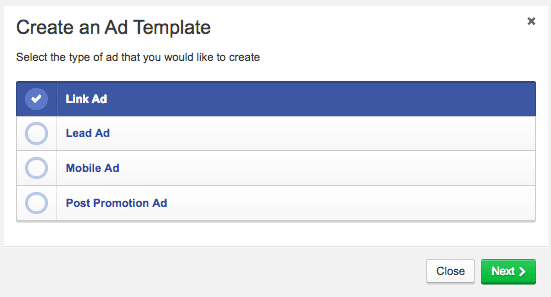

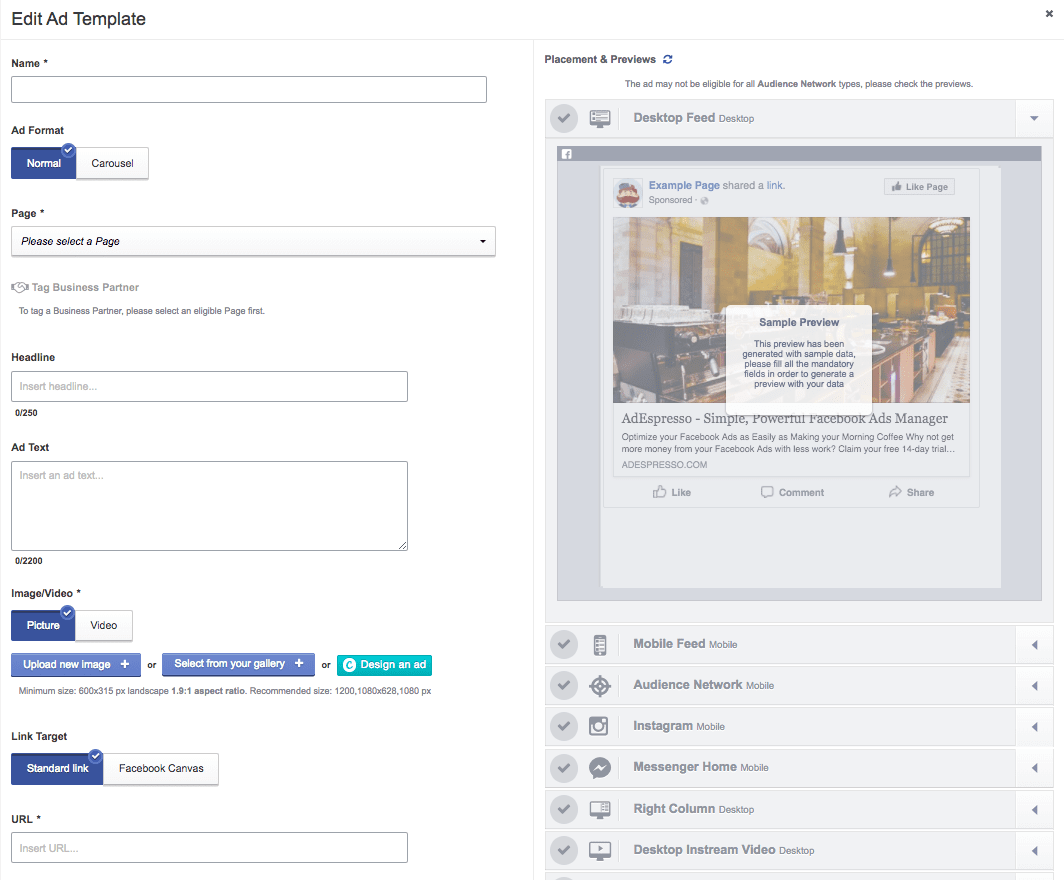

You’ll see a pop-up that lets you create a specific kind of ad template: a link ad, lead ad, mobile ad, or post-promotion ad.

We’re going to create a link ad template.

After you select your ad template type, a pop-up displays the creative elements of your ad template. You can choose the ad format, headline, ad text, creative, etc. Just design the ad template and save it, and you’ll have an ad template with all the creative elements you need, ready to use in any campaign later on!

Now, when we create our new campaign and reach Step 2, we have the option to select “Test ad templates” and from here we’ll be able to use our ad templates in a new campaign. This allows us to split test a video, static image and carousel ad within the same campaign!

5 Rules of A/B Testing Facebook Ads

If you want your Facebook A/B test results to be statistically significant and applicable across multiple campaigns, there are a few rules you should follow. Otherwise, you might get too caught up in all the testing ideas and forget about professional campaign setup and measurement practices.

Facebook A/B Testing Rule #1:

Test One Variable at a Time

While it might be tempting to start testing EVERYTHING at once, think about it:

The fewer ad variables you have, the quicker you’ll get relevant test results.

Moreover, testing a single ad variable per experiment will make it easier to track and evaluate the result.

Facebook A/B Testing Rule #2:

Use the Right Facebook Campaign Structure

When testing multiple Facebook ad designs or other in-ad elements, you’ve got two options for structuring your A/B testing campaigns:

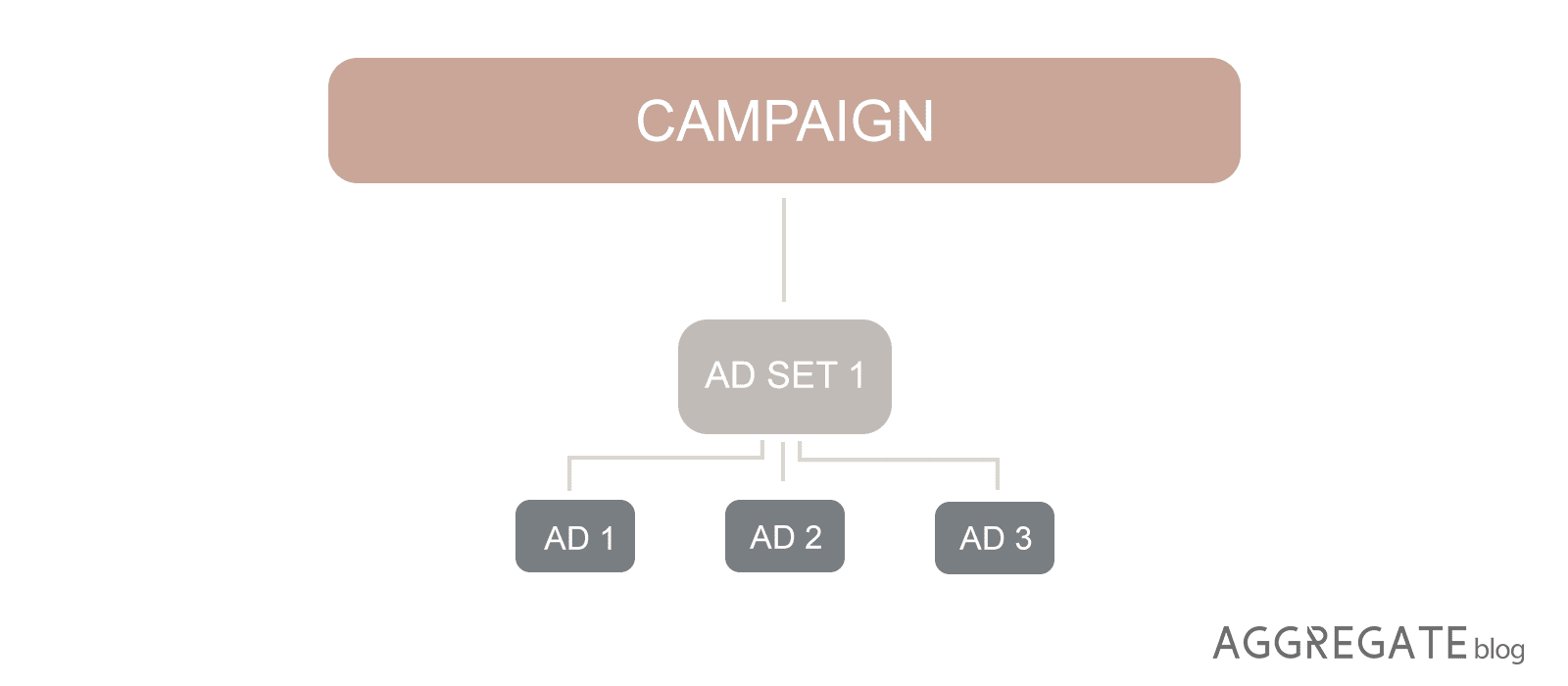

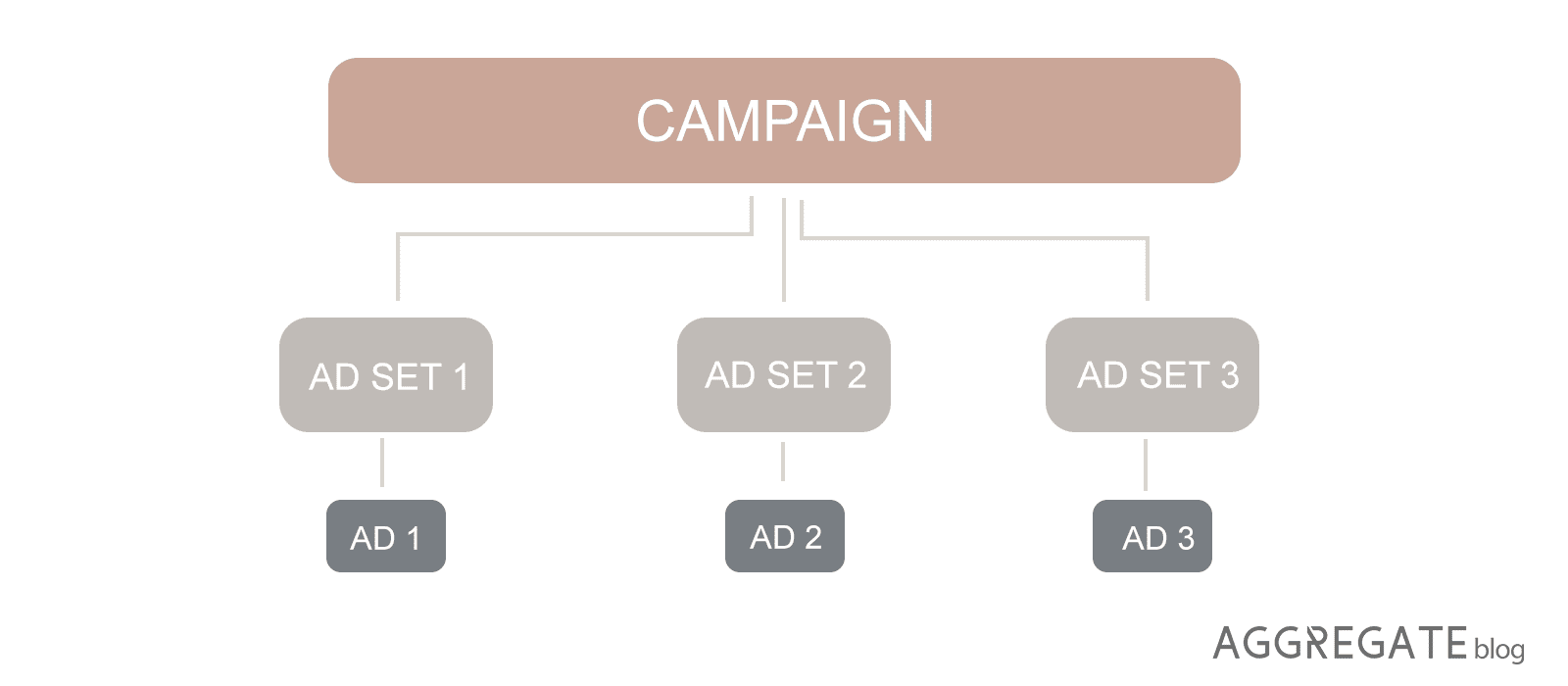

1. A single ad set: all your ad variations are within a single ad set

2. Multiple single-variation ad sets: each ad variation is placed in a separate ad set

If you place all your tested Facebook ad variations in a single ad set, Facebook will automatically optimize delivery toward the ads it expects to perform best, so the variations won’t get comparable exposure and your test results won’t be reliable.

You should opt for the second campaign structure, where all your ad variations are placed into separate ad sets.
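The recommended structure can be modeled as a plain data structure: one ad set per variation, all targeting the same audience and each getting an equal budget share, so the only difference between ad sets is the ad itself. This is a hypothetical Python planning model, not a call to Facebook’s Marketing API; the class, function, and field names are invented for illustration.

```python
from dataclasses import dataclass, field

@dataclass
class AdSet:
    name: str
    audience: str       # identical in every ad set, so only the ad differs
    daily_budget: float
    ads: list[str] = field(default_factory=list)

def one_ad_per_ad_set(variations: list[str], audience: str,
                      total_daily_budget: float) -> list[AdSet]:
    """Place each variation in its own ad set with an equal share of the budget."""
    share = total_daily_budget / len(variations)
    return [
        AdSet(name=f"Test - {v}", audience=audience, daily_budget=share, ads=[v])
        for v in variations
    ]

ad_sets = one_ad_per_ad_set(["Design A", "Design B", "Design C"],
                            audience="Lookalike 1%", total_daily_budget=60)
for s in ad_sets:
    print(s.name, s.audience, s.daily_budget, s.ads)
```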

Facebook A/B Testing Rule #3:

Ensure Your A/B Test Results Are Valid

How do you know when’s the best time to analyze your split test results and conclude the experiment?

To ensure that your A/B tests are valid, you need to have a sufficient amount of results to conclude from.

If you want your Facebook tests to give valuable insights, run the results through a statistical significance test to determine whether they are valid.
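Significance calculators for conversion rates commonly use a two-proportion z-test. Here is a minimal Python sketch using only the standard library. It assumes each click is an independent trial with a yes/no conversion outcome, and it is not necessarily the exact method any particular online calculator uses.

```python
from math import erf, sqrt

def two_proportion_z_test(conv_a: int, n_a: int,
                          conv_b: int, n_b: int) -> tuple[float, float]:
    """Two-sided two-proportion z-test; returns (z, p_value)."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)  # conversion rate if A and B were equal
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_a - p_b) / se
    # Standard normal CDF via the error function: Phi(x) = (1 + erf(x / sqrt(2))) / 2
    phi = 0.5 * (1 + erf(abs(z) / sqrt(2)))
    p_value = 2 * (1 - phi)
    return z, p_value

# Sales per click for the two ads in the example table earlier in this chapter
z, p = two_proportion_z_test(28, 237, 16, 187)
print(f"z = {z:.2f}, p = {p:.3f}")
```

Plugging in the chapter’s example (28 of 237 clicks vs. 16 of 187 clicks converting) gives z of about 1.09 and p of about 0.27, well above the common 0.05 cutoff. In other words, that sample alone is too small to call a winner with confidence, which is why Rule #4 asks for far more conversions per variation.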

Facebook A/B Testing Rule #4:

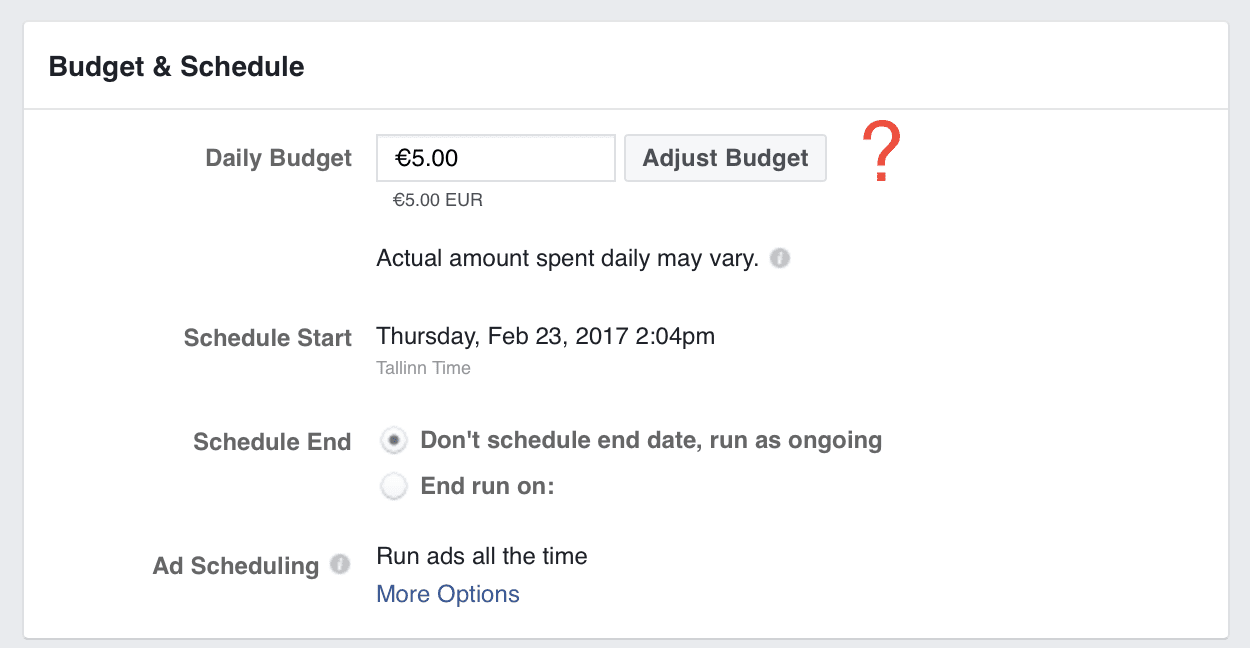

Set a Sufficient A/B Testing Budget

The more ad variations you’re testing, the more ad impressions and conversions you’ll need for statistically significant results.

So how much will it cost to run a successful Facebook ad test?

To get valid A/B test results, you’ll need at least 100 conversions per ad variation. If your cost per conversion is $2.50 and you want to test 4 different ad variations, your testing budget should be around $2.50 × 4 × 100 = $1,000.

Tip: When one of your split test variations is outperforming the others by a mile, you can conclude the experiment a little sooner. (However, you should still collect at least 50 conversions per variation.)

Overall, the general suggestion is not to overdo it. It’s pointless to create hundreds of experiments inside a single campaign unless you have thousands and thousands of dollars in the budget. Start with micro experiments, testing only a few elements and give them a reasonable budget.

Facebook A/B Testing Rule #5:

Prioritize Your Facebook Ad Tests

When searching for Facebook A/B testing ideas, think about which ad element could have the biggest effect on click-through and conversion rates. After all, your testing capacity is limited by both time and resources. You could even set up a prioritization table to decide which ad elements to test first.
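A prioritization table can be as simple as a scored list sorted by score. The sketch below uses ICE scoring (impact, confidence, ease, each rated 1 to 10 and averaged), one common scheme that is swapped in here as an assumption; the ideas and scores are hypothetical placeholders for your own estimates.

```python
from dataclasses import dataclass

@dataclass
class TestIdea:
    element: str
    impact: int      # expected effect on CTR/conversion rate, 1-10 (your estimate)
    confidence: int  # how sure you are about that impact, 1-10
    ease: int        # how cheap and quick the test is to run, 1-10

    @property
    def score(self) -> float:
        return (self.impact + self.confidence + self.ease) / 3

# Hypothetical ideas and scores, for illustration only
ideas = [
    TestIdea("Ad image", impact=8, confidence=6, ease=9),
    TestIdea("Landing page", impact=9, confidence=5, ease=4),
    TestIdea("Headline", impact=6, confidence=7, ease=9),
]

ranked = sorted(ideas, key=lambda i: i.score, reverse=True)
for idea in ranked:
    print(f"{idea.element:<13} {idea.score:.1f}")
```

Averaging keeps scores on the same 1 to 10 scale as the inputs; some teams multiply the three values instead, which punishes a single low rating more heavily.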

What Facebook Ad Elements Should You A/B Test?

At AdEspresso, we studied data from over $3 million worth of Facebook ad experiments and listed the campaign elements with the highest split-testing ROI:

- Countries

- Precise interests

- Facebook ad goals

- Mobile OS

- Age ranges

- Genders

- Ad designs

- Titles

- Relationship status

- Landing page

However, the best A/B testing options really depend on what your goals are.

Up next, you’ll find 12 ideas for your Facebook A/B testing.

-

A/B test your Facebook audiences

-

A/B test your Facebook ad types

-

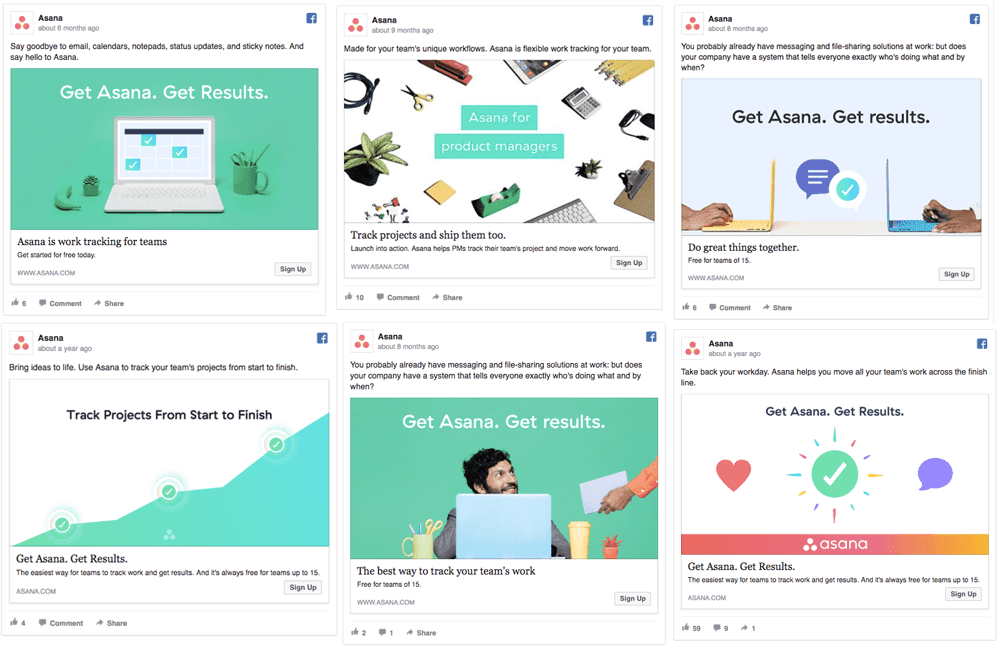

Split test your Facebook ads images

-

Split test stock photos vs.custom illustrations

-

Split test images vs. videos

-

A/B test your Facebook ads’ value proposition

-

A/B test your Facebook ads headline

-

A/B test your Facebook ads copy

-

Experiment with different bidding methods

-

Split test your Facebook ads landing pages

-

Split test different campaign objectives

-

Split test your Facebook ad placement

As you might imagine, the opportunities for testing are almost endless. Once you’ve completed the first round of tests, it’s time to start another one to get fresh insights.

Additional reading:

If you want more Facebook ads A/B testing ideas, check out our recommended guides to get your creative juices flowing.

10 Burning Questions That You Can Answer by A/B Testing Your Facebook Ads